The AI Agent action in Automation now uses fewer tokens per execution without reducing output quality. Because usage is billed per token, this can lower cost and make it easier to scale AI-powered workflows.

What Changed

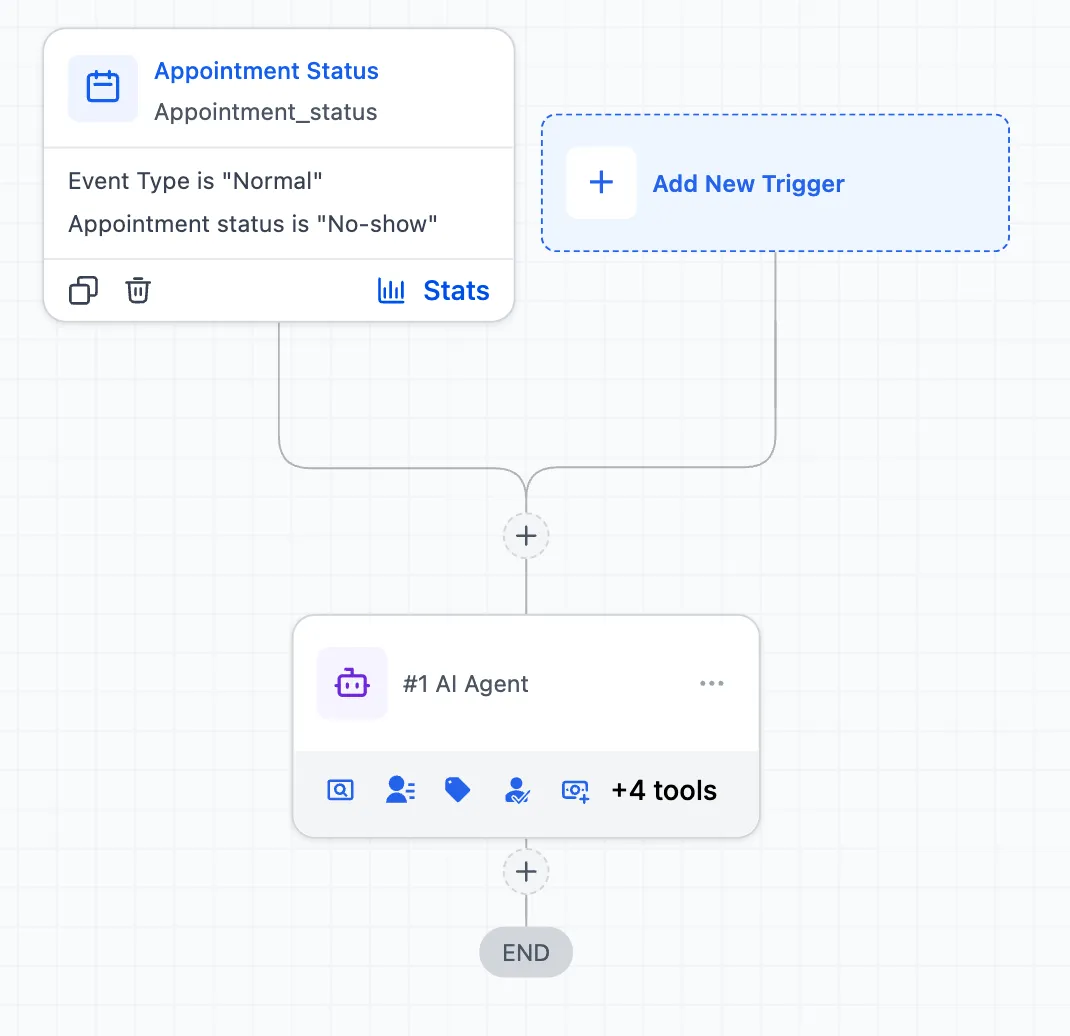

Several optimizations were made to how the AI Agent action sends context to the model:

- Cleaner context: Duplicate data, internal system data, redundant contact details, and unnecessary workflow metadata are no longer sent on every turn.

- Smarter tool responses: Only relevant fields such as name, email, and phone are passed to the model instead of large sets of unrelated record fields.

- Conversation memory management: Long-running agents now summarize older conversation steps while keeping recent steps in full detail.

- Structured output optimization: The final extraction step no longer repeats the full context, which reduces token usage further.
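The sketch below illustrates two of these ideas: trimming tool responses to relevant fields, and summarizing older conversation steps. It is a minimal illustration under stated assumptions, not the actual implementation. The names `RELEVANT_FIELDS`, `trim_tool_response`, `Step`, and `compact_history` are hypothetical, and simple truncation stands in for whatever summarization the product really uses.

```python
from dataclasses import dataclass

# Hypothetical allow-list; name, email, and phone are the examples given above.
RELEVANT_FIELDS = ("name", "email", "phone")


def trim_tool_response(record: dict) -> dict:
    """Keep only allow-listed fields from a tool's record payload."""
    return {key: record[key] for key in RELEVANT_FIELDS if key in record}


@dataclass
class Step:
    role: str
    content: str


def compact_history(steps: list[Step], keep_recent: int = 3, max_chars: int = 60) -> list[Step]:
    """Collapse older steps into one summary step; keep recent steps verbatim."""
    if len(steps) <= keep_recent:
        return list(steps)
    cut = len(steps) - keep_recent
    older, recent = steps[:cut], steps[cut:]
    # Assumption: truncation is a placeholder for a real, model-generated summary.
    summary = " | ".join(f"{s.role}: {s.content[:max_chars]}" for s in older)
    return [Step("system", f"Summary of earlier steps: {summary}")] + recent
```

In this sketch, a record with dozens of fields is reduced to at most three before it reaches the model, and a long history shrinks to one summary step plus the last `keep_recent` steps.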

Results

Measured before and after the change on the same workflow and contact, with no reported quality loss:

- First LLM call: 36% token reduction

- Total execution: 20% token reduction
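To see what a percentage reduction means for a token budget, here is a small worked example. The 20% figure comes from the results above; the baseline of 10,000 tokens per run and the 1,000,000-token budget are made-up numbers for illustration only, and the function names are hypothetical.

```python
def tokens_after(baseline_tokens: int, reduction_pct: int) -> int:
    """Tokens per run after applying a percentage reduction."""
    return round(baseline_tokens * (100 - reduction_pct) / 100)


def runs_within_budget(token_budget: int, tokens_per_run: int) -> int:
    """Number of whole runs that fit inside a fixed token budget."""
    return token_budget // tokens_per_run


# Hypothetical inputs: only the 20% reduction is taken from the results above.
baseline = 10_000
budget = 1_000_000

after = tokens_after(baseline, 20)
runs_before = runs_within_budget(budget, baseline)
runs_after = runs_within_budget(budget, after)
print(after, runs_before, runs_after)
```

With these assumed inputs, each run drops from 10,000 to 8,000 tokens, so the same budget covers 125 runs instead of 100. Actual savings depend on the workflow.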

Why This Matters

Each workflow run now uses fewer tokens, which can help reduce usage-based AI cost while preserving output quality. For teams using AI features inside automations, this leaves more room to run and scale AI processes, because each additional run adds fewer tokens than before.

In Case You Missed It

You can also read about the Inbound Email Trigger for Workflows.

Need Help Applying This Update?

If you’d like help rolling this out in SMBcrm, visit Support or request a demo.